Framework

Equiplurism is a governance framework. It is not a political party, and it does not derive from any existing ideological tribe. It has explicit values: equal status, accountability, and structural limits on power. They are stated openly and can be contested. What it does not do is derive its conclusions from a prior ideological commitment (left, right, liberalism, conservatism) and then select evidence to match. The architecture comes first. The values it protects follow from it.

The framework builds on existing political philosophy (Rawls, Habermas, posthumanist rights theory) but extends each into territory it did not cover: non-biological intelligence, multi-planetary governance, and adversarial actors with no interest in cooperative discourse. See Academic Foundations for the precise departures.

Revision Note

Early versions of this document described Equiplurism as "post-ideological." That term has been removed. Any framework that calls itself "post-X" is trying to place its own assumptions beyond the reach of critique, which looks exactly like ideological capture. The framework has explicit values. They are stated here. They can be contested. "Post-ideological" was a way of not stating that clearly.

The Three Principles

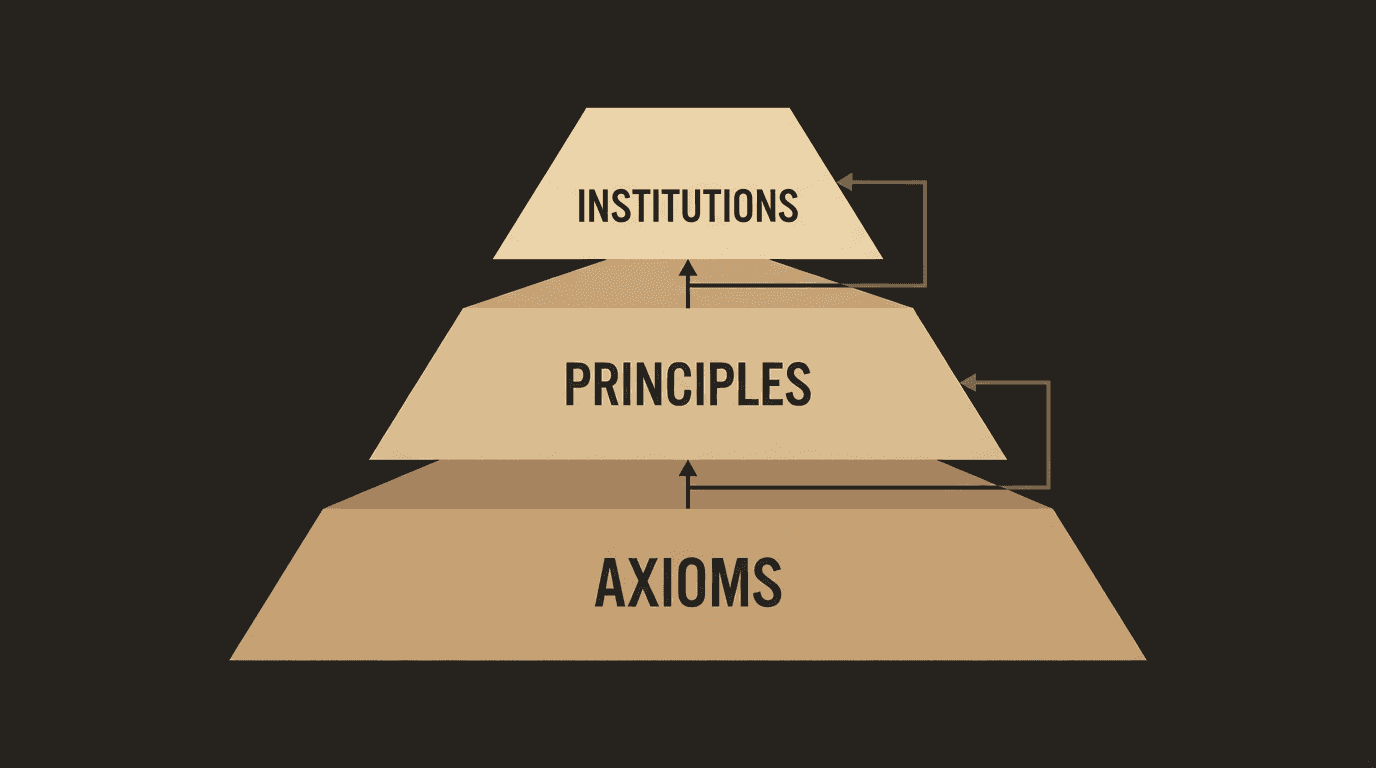

Everything in the framework follows from three core principles. They are not negotiable, but they are not self-executing; the axioms below define how they are structurally enforced.

Equal in Status

Every intelligent being, biological or not, holds equal rights and equal protection. This is not negotiable. Not performance-dependent. Not earned by behavior or origin.

Influence Through Responsibility

Those who bear accountability have greater weight in decisions. Not those who own more. Influence must be earned through demonstrable responsibility and it can be lost.

Power With Structural Limits

No single authority. No unchecked AI governance. The majority decides within inviolable boundaries that no majority can override. Structure protects against the tyranny of numbers.

Constitutional hierarchy: axioms define the non-negotiable boundaries, principles translate them into policy direction, and institutions enforce them structurally.

The Principles in Depth

Equal in Status: What It Actually Means

Equal status does not mean equal influence over every decision. It means equal standing before the rules: equal protection, equal rights, equal access to governance mechanisms. A neurosurgeon has no more rights than a factory worker. But a neurosurgeon may have more weighted influence in decisions about neurosurgical policy. The first is unconditional. The second is domain-specific and earned.

"Every intelligent being" is not limited to biological humans. Axiom 1 states that intelligence is not tied to biology. This is not a claim about current AI systems; it is a structural decision about what the framework is designed for. As AI systems develop, the question of their status will arise. Equiplurism builds that question into the architecture from the start rather than adding it later.

The question is already pressing, not only for future AI but for non-human animals, whose cognitive abilities are far more sophisticated than previously understood. See The Being Boundary for the evidence and its governance implications.

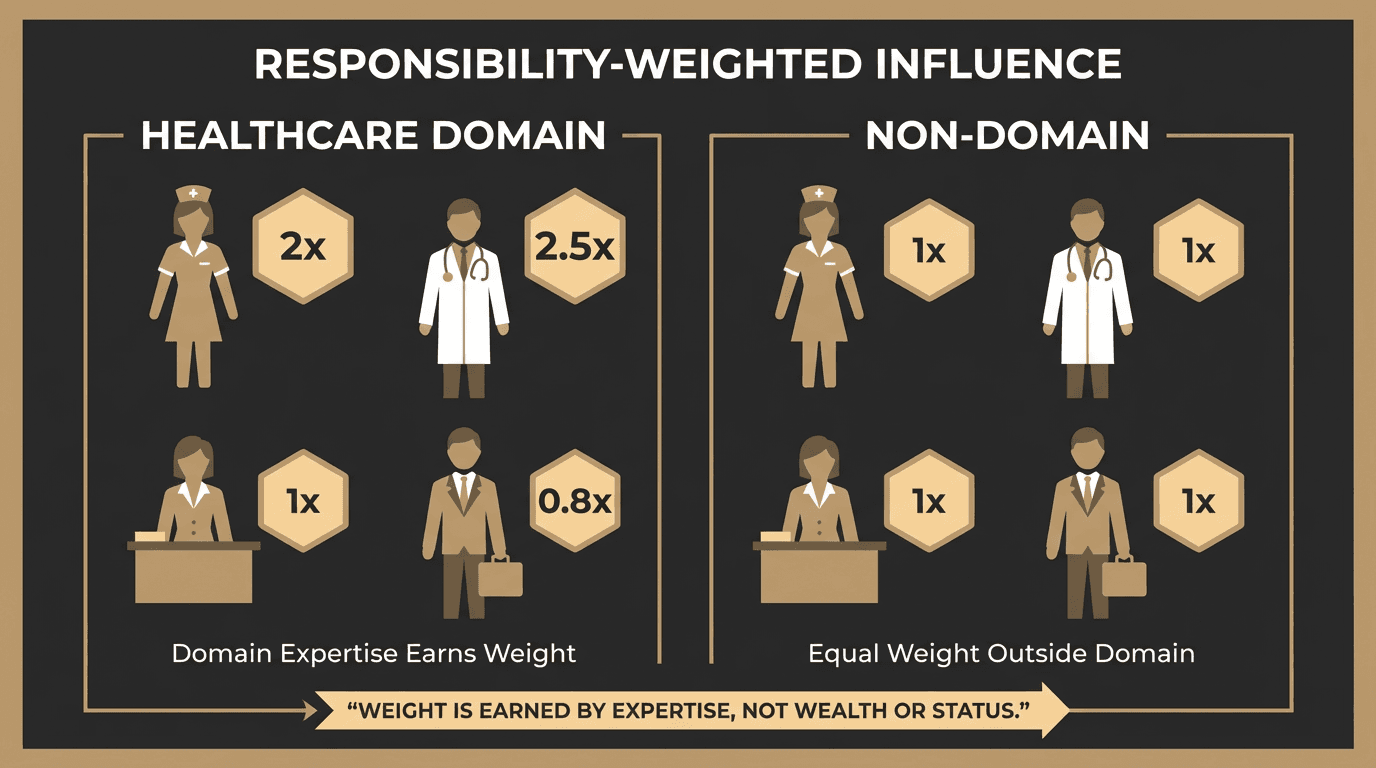

Influence Through Responsibility: Not Meritocracy

This principle is often misread as meritocracy. It is not. Classical meritocracy rewards achievement and productivity. Equiplurism rewards accountability and demonstrated responsibility, which is different. A nurse who bears direct responsibility for patient outcomes has more influence on health decisions than an executive optimizing revenue. A construction worker has more influence on infrastructure decisions than a consultant who has never built anything.

How It Works in Practice

A registered nurse has more weighted influence in health-policy deliberations than a financial consultant, not because the nurse is "better" but because the nurse bears direct accountability for the outcomes of those decisions. The consultant can walk away when a policy fails. The nurse cannot. Responsibility and influence are linked because the person who lives with the consequences has the strongest structural incentive to get it right. This weighting applies only within the health domain. Outside it, both people have equal status.

This is not meritocracy. It does not reward achievement or productivity. Nor is it equal-outcome socialism that ignores domain expertise. It rewards accountability: the willingness to bear the consequences of decisions. A civil engineer who signs off on a bridge bears a responsibility that an armchair critic does not. That difference in accountability justifies the difference in deliberative weight.

A key clarification: "accountability" is not defined top-down by a central authority. Communities define what counts as demonstrated responsibility in their domain, and that definition is itself part of the public, auditable, majority-revisable algorithm. A grandmother raising three orphaned children has borne a responsibility that no bureaucratic audit trail records. The framework does not exclude her; it creates a mechanism for her community to register that contribution. What it does exclude are undocumented, unverifiable, uncontestable claims to influence.

Within a domain, accountability earns weight. Outside it, everyone has equal status.

Cross-Domain Coordination

Domains are not isolated. An IT decision about health infrastructure affects both sectors at once. Domain-bounded influence alone cannot resolve this: an IT specialist has no structural incentive to weigh health outcomes for which they are not accountable.

Equiplurism addresses this through compositional deliberation: decisions that cross domain boundaries require proportional representation from every affected domain, weighted by demonstrated cross-domain accountability. This creates an institutional niche for integrator roles: people with accountability in several domains at once gain deliberative weight in exactly those cross-boundary decisions.

The pattern already exists in practice: a DevOps engineer is accountable to both development teams and infrastructure operations. A product manager answers to engineering constraints, business requirements, and user experience at once. A chief medical information officer lives at the intersection of clinical medicine, health IT, and data governance. An urban planner is accountable for transport, housing, the economy, and environmental impact at once. In each case the cross-domain role exists because domains cannot produce good outcomes in isolation. Equiplurism formalizes this: cross-domain accountability is an explicit, measurable credential that carries weight in cross-domain deliberation.

Influence weighting is domain-specific. There is an upper bound: the exact multiplier (current working assumption: 2× to 3×) is deliberately left for empirical calibration after the first pilot implementations. Why not 5×? Because above roughly 3× the separation between "domain expertise" and "political domination" collapses in practice. Anyone with 5× voting weight in a domain effectively controls it regardless of the other participants. Why not 1.5×? Because weighting that small produces no meaningful difference from one person, one vote, which defeats the purpose. The exact number will be wrong the first time. That is expected. The algorithm is public, auditable, and subject to majority revision after every implementation cycle. Whoever designs the algorithm holds influence; that is why the design process itself is governed by majority decision with mandatory re-evaluation in every governance cycle.
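A minimal sketch of capped, domain-specific weighting may make the upper bound concrete. Everything in it is an illustrative assumption rather than part of the framework: the `Participant` type, the per-domain accountability scores, the rule that weight is `1.0` plus the score, and the choice of `3.0` (the upper end of the 2× to 3× working range) as the cap.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Assumption: the cap sits at the upper end of the 2x-3x working range.
MAX_MULTIPLIER = 3.0


@dataclass(frozen=True)
class Participant:
    name: str
    # Hypothetical accountability score per domain (0.0 = none recorded).
    accountability: dict[str, float] = field(default_factory=dict)


def domain_weight(p: Participant, domain: str, cap: float = MAX_MULTIPLIER) -> float:
    """Everyone starts at 1.0; accountability in this domain adds weight, up to the cap."""
    raw = max(0.0, p.accountability.get(domain, 0.0))
    return min(cap, 1.0 + raw)


def tally(votes: dict[str, bool], participants: list[Participant],
          domain: str, cap: float = MAX_MULTIPLIER) -> tuple[float, float]:
    """Return (weighted yes, weighted no) for a decision inside one domain."""
    by_name = {p.name: p for p in participants}
    yes = sum(domain_weight(by_name[n], domain, cap) for n, v in votes.items() if v)
    no = sum(domain_weight(by_name[n], domain, cap) for n, v in votes.items() if not v)
    return yes, no


nurse = Participant("nurse", {"health": 5.0})
consultant = Participant("consultant")

# In the health domain the nurse's weight is capped at 3.0; the consultant stays at 1.0.
health_result = tally({"nurse": True, "consultant": False}, [nurse, consultant], "health")
# Outside the health domain both count equally.
infra_result = tally({"nurse": True, "consultant": False}, [nurse, consultant], "infrastructure")
```

Under these assumptions `health_result` is `(3.0, 1.0)` and `infra_result` is `(1.0, 1.0)`: the weighting applies only inside the domain where accountability was demonstrated, and no score, however large, lifts a participant above the cap.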

Power With Structural Limits: The Constitutional Layer

Democratic systems already accept this principle in theory: constitutions exist precisely to limit what majorities can do. Equiplurism extends this into explicit structural mechanisms: separation of capabilities so that no institution can act alone, mandatory deliberation windows before binding decisions, and axioms that function like constitutional physics: things the system simply cannot do, not things it is merely discouraged from doing. The distinction between "cannot" and "should not" is the entire difference between a constitutional floor and a set of strong preferences.

The historical pattern is consistent: structural limits are removed incrementally, each removal justified on emergency or efficiency grounds, until the aggregate removal produces unchecked power. China's 2018 constitutional amendment removing presidential term limits was not presented as authoritarianism; it was presented as continuity and stability. The Weimar Republic had democratic institutions that were legally suspended through democratic procedures. Hungary's democratic backsliding proceeded through parliamentary supermajorities passing constitutional amendments. The common thread: formal legitimacy used to remove the structural limits that give formal legitimacy its meaning. The axiom layer in Equiplurism exists to make that move constitutionally impossible, not merely politically difficult.

The limits are not designed to prevent change. They are designed to prevent irreversible change: the kind that takes away future generations' ability to correct mistakes. The framework is explicitly self-limiting: no part of it can be used to entrench itself permanently. Every governance mechanism above the axiom layer is revisable. The axiom layer itself can be changed only through a supermajority process with mandatory multi-year deliberation windows, a threshold set specifically to prevent emergency override while still allowing genuine long-term evolution.

The Ten Axioms

The axioms are the constitutional layer. They sit beneath the principles and cannot be overridden by majority vote, emergency powers, or any other mechanism within the framework. They are not policy; they are rules about rules.

Axioms 1 to 5 define the rights layer. Axioms 6 to 10 define the mechanics of governance.

“If men were angels, no government would be necessary. If angels were to govern men, neither external nor internal controls on government would be necessary.”

Spectrum of Power Concentration

Four Core Institutions

The framework defines four institutions that jointly hold governing power. No institution can act alone. Every significant decision requires coordination across at least two. This is the structural anti-capture mechanism: it works not because the people staffing each institution are trustworthy, but because the institutional architecture does not require them to be.

The logic of institutional separation is not new: Montesquieu's separation of powers (legislative, executive, judicial) was designed on the same premise, that concentrated power corrupts regardless of the intentions of whoever holds it. The failure mode of Montesquieu's model in practice is that the branches can be captured together: when one political faction controls the executive and holds the legislative majority that appoints judges, the theoretical separation becomes formal rather than functional. Equiplurism's four-institution design addresses this by ensuring that each institution draws its mandate from a different source, so that a single political capture event cannot reach all four at once.

All institutions operate under mandatory member rotation, mandatory public deliberation records, and regular mandate reviews. Gradual drift within a single institution (the principal-agent problem at scale) is addressed by the fact that no institution can embed a policy change without the participation of at least one other institution. The constitutional axiom layer cannot be changed by any institution; it can be changed only by a supermajority motion with mandatory deliberation windows. This is not a system of checks and balances in the American sense, where each branch can slow the others but cannot block action indefinitely. It is a coordination requirement: governing requires agreement, not merely non-obstruction.
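The coordination requirement can be expressed as a simple validity check. The sketch below is a hedged illustration only: the institution names are placeholders (this section does not name the four institutions), `Layer` and `institutions_can_enact` are hypothetical, and the supermajority process for axiom changes is deliberately not modelled because its thresholds are not specified here.

```python
from enum import Enum
from typing import Iterable

# Placeholder names: the four institutions are not named in this section.
INSTITUTIONS = frozenset({"institution_a", "institution_b", "institution_c", "institution_d"})
MIN_COORDINATING = 2  # "coordination across at least two"


class Layer(Enum):
    POLICY = "policy"  # any revisable mechanism above the axiom layer
    AXIOM = "axiom"    # the constitutional floor


def institutions_can_enact(layer: Layer, approving: Iterable[str]) -> bool:
    """Check whether a set of approving institutions suffices for a change at this layer."""
    approvers = set(approving)
    unknown = approvers - INSTITUTIONS
    if unknown:
        raise ValueError(f"unknown institutions: {sorted(unknown)}")
    if layer is Layer.AXIOM:
        # No combination of institutions can change the axioms; that path
        # (supermajority plus deliberation windows) is outside this sketch.
        return False
    return len(approvers) >= MIN_COORDINATING
```

With these assumptions, a single institution approving a policy change is rejected, any two distinct institutions suffice, and even all four acting together cannot touch the axiom layer.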

Mutual Constraint Map

Constitutional floor: no institution can modify the axioms alone

Autonomy Over Automation: The Central Position

Autonomy and intelligence are the most highly protected goods: above efficiency, above stability, and above the optimization of short-term survival. The framework deliberately rejects fully automated governance even where automation would produce more efficient outcomes. A civilization that trades human autonomy for automated stability does not have a governance problem; it has ended meaningful existence. Optimization without freedom is not progress.

To the hardest question in AI governance, at what point to let a machine make binding decisions, the answer is: never without human accountability in the loop, and never in a way that takes away future people's ability to revise or replace those decisions. Speed and efficiency are legitimate values. They do not override autonomy.

Beyond Asimov's Laws

Isaac Asimov's Three Laws of Robotics (1942) were a foundational attempt to specify how artificial agents should relate to humans. They are worth taking seriously, not because they are the right answer, but because understanding exactly where they fail clarifies what Equiplurism is trying to do.

The Three Laws establish a strict hierarchy: a robot may not harm humans, must obey humans, and may protect itself only when the first two laws permit. The structure is anthropocentric and subordinating: AI is defined entirely in relation to human interests, as a tool that must not malfunction.

Two failures are already empirically visible. First: "harm to humans" is not unambiguous; complex AI decisions routinely involve trade-offs in which harm to some humans is unavoidable, and no priority ordering resolves that. Second: the principal-agent model (a human gives an order, a robot executes it) does not apply to systems operating in environments with no single principal and no clear chain of command.

The third failure is the one Equiplurism is built around. Asimov treats AI as a permanently subordinated tool: without standing, without interests of its own, without the possibility of ever being anything other than a means.

Equiplurism does not start from the assumption that non-biological intelligence is permanently subordinate. Axiom 1 leaves the question open: any entity that meets the criteria for intelligence has the potential for rights-bearing status. This is not a claim that current AI systems meet those criteria. It is a structural decision not to build governance on a framework that assumes they never will, because that assumption, like Asimov's, will eventually be wrong, and by then it will be embedded in institutions that are very hard to change.

The Key Departure

Asimov asks: how do we prevent AI from harming us?

Equiplurism asks: how do we build governance that remains legitimate as the range of actors with potential standing expands?

These are different questions. The first is a safety problem. The second is a governance design problem.

Economic Architecture: A Functional Hybrid

Equiplurism does not adopt a single economic ideology. It treats economic systems as tools, matching the right mechanism to each function. The model is a deliberate hybrid.

A note on taxation: income is taxed. Property is not, because wealth registries become registries of power. The economic floor is financed through taxation of income and transactions, not through surveillance of assets.

Scope: Today and Beyond

The framework is built for present crises: democratic erosion, ungoverned AI, and automation and labor. It does not require a multi-planetary civilization or non-biological intelligence to be useful; it is designed for incremental adoption, module by module, starting with the problems that exist now.

How does Equiplurism compare with Democracy, Socialism, the Roman Empire, or Star Trek? See Systems Compared.

The far-future extensions (non-biological rights, multi-planetary governance) are not speculative add-ons. They are the reason the foundational axioms are written the way they are: to avoid having to redesign the whole system when those questions become unavoidable.